Run the executable, and JAVA by default will be installed in:

How to install jupyter notebook in windows license#

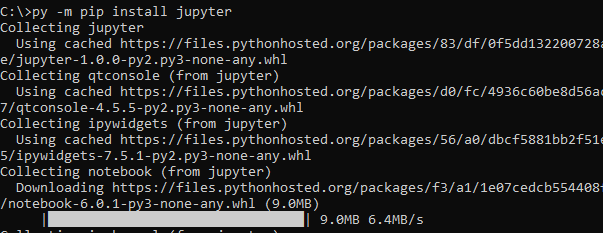

Go to Java?s official download website, accept Oracle license and download Java JDK 8, suitable to your system. Java version “1.8.0_144″Java(TM) SE Runtime Environment (build 1.8.0_144-b01)Java HotSpot(TM) Client VM (build 25.144-b01, mixed mode, sharing)Ĭheck the setup for environment variables: JAVA_HOME and PATH, as described below. Open cmd (windows command prompt), or anaconda prompt, from start menu and run: Install Java 8īefore you can start with spark and hadoop, you need to make sure you have java 8 installed, or to install it. I stole a trick from this article, that solved issues with file. It is used for running shell commands, and accessing local files. Another set of problems come from winutils.exe file,which an hadoop component for Windows OS. Most issues caused from improperly set environment variables, so be accurate about it and recheck. But since its fast evolving infrastructure, methods and versions are dynamic, and a lot of outdated and confusing materials out there. If you find the right guide, it can be a quick and painless installation. Spark can load data directly from disk, memory and other data storage technologies such as Amazon S3, Hadoop Distributed File System (HDFS), HBase, Cassandra and others. Spark ? Lightning-fast unified analytics engine Apache Spark and PySparkĪpache Spark is an analytics engine and parallel computation framework with Scala, Python and R interfaces. Here is a simple guide, on installation of Apache Spark with PySpark, alongside your anaconda, on your windows machine. PySpark interface to Spark is a good option. When you need to scale up your machine learning abilities, you will need a distributed computation.
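The Java checks above can also be scripted. The following is a minimal sketch, not part of the original guide: the helper names `check_java_env` and `java_version_banner` are hypothetical, and the script only assumes that `JAVA_HOME` should point to an existing folder and that a `java` executable should be reachable through `PATH`.

```python
import os
import shutil
import subprocess


def check_java_env(env=None):
    """Return a list of human-readable problems with the Java setup."""
    env = os.environ if env is None else env
    problems = []

    java_home = env.get("JAVA_HOME")
    if not java_home:
        problems.append("JAVA_HOME is not set")
    elif not os.path.isdir(java_home):
        problems.append(f"JAVA_HOME points to a missing folder: {java_home}")

    # Search only the PATH value from `env`, not the PATH of this process.
    if shutil.which("java", path=env.get("PATH", "")) is None:
        problems.append("java was not found on PATH")

    return problems


def java_version_banner():
    """Return the text printed by `java -version`, or None if java cannot be run."""
    try:
        result = subprocess.run(
            ["java", "-version"], capture_output=True, text=True
        )
    except OSError:
        return None
    # The JVM usually writes its version banner to stderr rather than stdout.
    return (result.stderr or result.stdout).strip()
```

Calling `check_java_env()` with no arguments inspects the current process environment; an empty list means neither check found a problem. It does not verify that the installed version is Java 8, so compare the banner text by eye.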


Spark 2.3.2, Hadoop 2.7, Python 3.6, Windows 10

Fall back to Windows cmd if it happens.


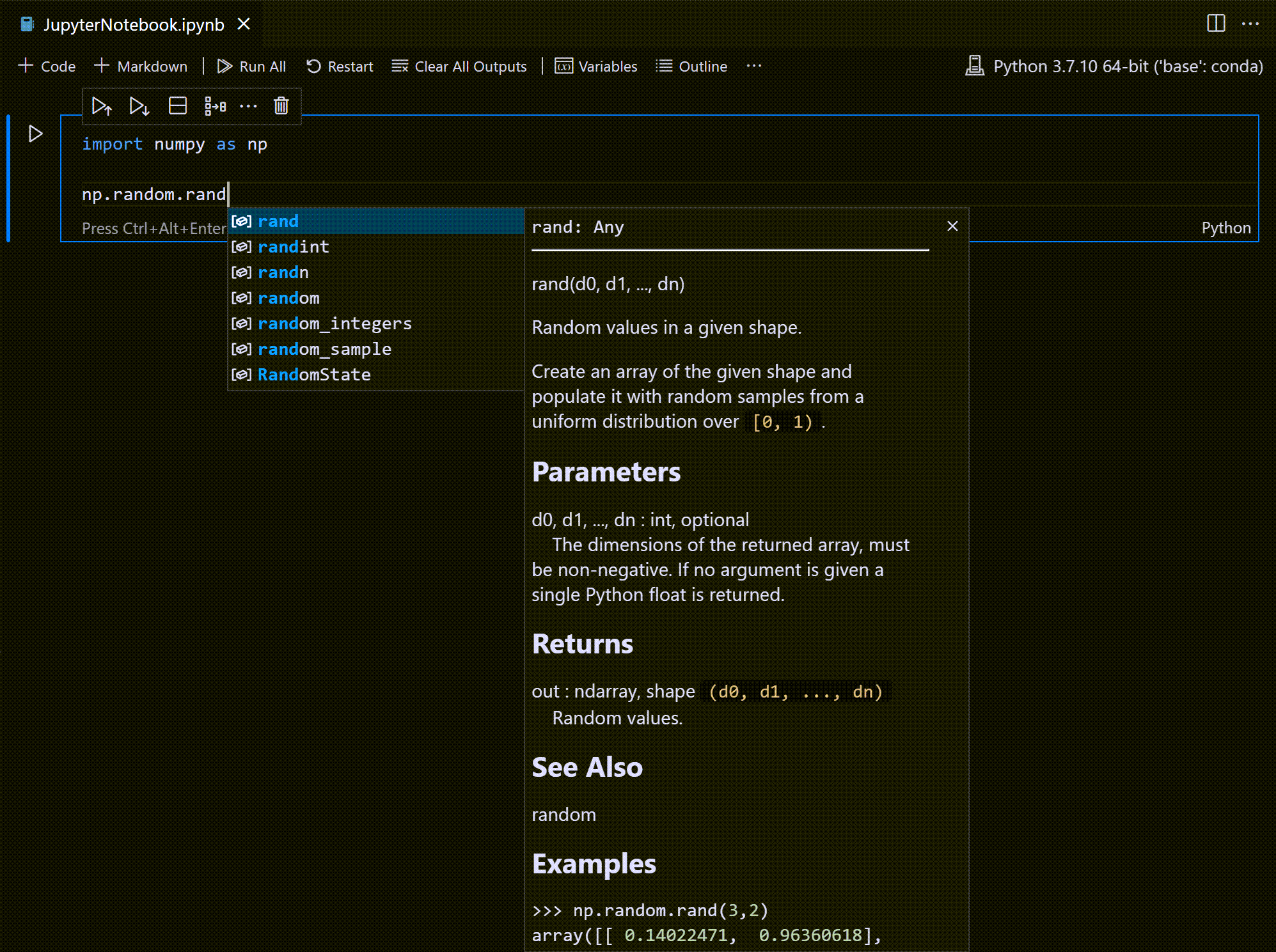

When I write PySpark code, I use Jupyter Notebook to test my code before submitting a job on the cluster. In this post, I will show you how to install and run PySpark locally in Jupyter Notebook on Windows. I've tested this guide on a dozen Windows 7 and 10 PCs in different languages.

Section A: items to get

1. The Spark distribution, which comes as a .tgz file.
2. Python and Jupyter Notebook. You can get both by installing the Python 3.x version of the Anaconda distribution.
3. winutils.exe, a Hadoop binary for Windows, from Steve Loughran's GitHub repo. Go to the Hadoop version that corresponds to your Spark distribution and find winutils.exe under /bin.
4. The findspark Python module, which can be installed by running python -m pip install findspark in either Windows command prompt or Git Bash, once Python is installed from item 2. You can find the command prompt by searching for cmd in the search box.
5. Java. If you don't have Java, or your Java version is 7.x or lower, download and install Java from Oracle. I recommend getting the latest JDK (current version 9.0.1).
6. 7-zip. To unpack a .tgz file on Windows, you can download and install 7-zip, then unpack the .tgz file from the Spark distribution in item 1 by right-clicking the file icon and selecting 7-zip > Extract Here.

Section B: set up PySpark

After getting all the items in section A, let's set up PySpark.

1. Unpack the .tgz file. For example, I unpacked with 7-zip from step A6 and put mine under D:\spark\spark-2.2.1-bin-hadoop2.7
2. Move the winutils.exe downloaded from step A3 to the \bin folder of the Spark distribution. For example, D:\spark\spark-2.2.1-bin-hadoop2.7\bin\winutils.exe
3. Add environment variables. The environment variables let Windows find where the files are when we start the PySpark kernel. You can find the environment variable settings by typing “environ…” in the search box. The variables to add are, in my example: Name …
4. In the same environment variable settings window, look for the Path or PATH variable, click edit and add D:\spark\spark-2.2.1-bin-hadoop2.7\bin to it. In Windows 7 you need to separate the values in Path with a semicolon.
5. (Optional, if you see a Java-related error in step C.) Find the installed Java JDK folder from step A5, for example D:\Program Files\Java\jdk1.8.0_121, and add the following environment variable: Name … If the JDK is installed under \Program Files (x86), replace the Progra~1 part with Progra~2 instead. In my experience, this error only occurs in Windows 7, and I think it's because Spark couldn't parse the space in the folder name.

Edit (1/23/19): You might also find Gerard's comment helpful.

Section C: run PySpark in Jupyter Notebook

To run Jupyter Notebook, open Windows command prompt or Git Bash and run jupyter notebook. If you use Anaconda Navigator to open Jupyter Notebook instead, you might see a “Java gateway process exited before sending the driver its port number” error from PySpark in step C.
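A first notebook cell for testing a local PySpark install might look like the sketch below. It is not the author's original code. It assumes the findspark module is installed and that Spark can be located through a `SPARK_HOME` environment variable. The variable names `SPARK_HOME` and `HADOOP_HOME` are assumptions here, and the helper names `missing_spark_settings` and `pyspark_smoke_test` are hypothetical.

```python
import os


def missing_spark_settings(env=None, required=("SPARK_HOME", "HADOOP_HOME")):
    """Return the names in `required` that are unset or empty in `env`.

    The default names are assumptions, not taken from the guide.
    """
    env = os.environ if env is None else env
    return [name for name in required if not env.get(name)]


def pyspark_smoke_test():
    """Start a local Spark session, count a tiny DataFrame, then stop the session."""
    # Imported lazily so the helper above still works before PySpark is set up.
    import findspark

    # Locates the Spark installation and makes pyspark importable.
    findspark.init()

    from pyspark.sql import SparkSession

    spark = (
        SparkSession.builder
        .master("local[*]")       # run Spark locally, using all available cores
        .appName("smoke-test")    # hypothetical application name
        .getOrCreate()
    )
    try:
        # Build a five-row DataFrame and count its rows.
        return spark.range(5).count()
    finally:
        spark.stop()


# In a notebook cell you might run:
#   print(missing_spark_settings())
#   print(pyspark_smoke_test())
```

Running `missing_spark_settings()` first is a cheap way to catch an unset variable before Spark starts. If `pyspark_smoke_test()` fails with a Java gateway error, the Java-related environment variable is the first thing to recheck.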